In the midst of the hand-wringing over the use of artificial intelligence in academic writing, one fundamental truth seems to be getting lost: scientific papers exist to advance knowledge, not to pass a style audit or some sort of forensic analysis by not-too-keen peer reviewers. Whether a paper was written with the help of AI, edited by a colleague, or typed out manually at 2 a.m. is entirely irrelevant if the work it communicates is original, rigorous, and contributes meaningfully to the field.

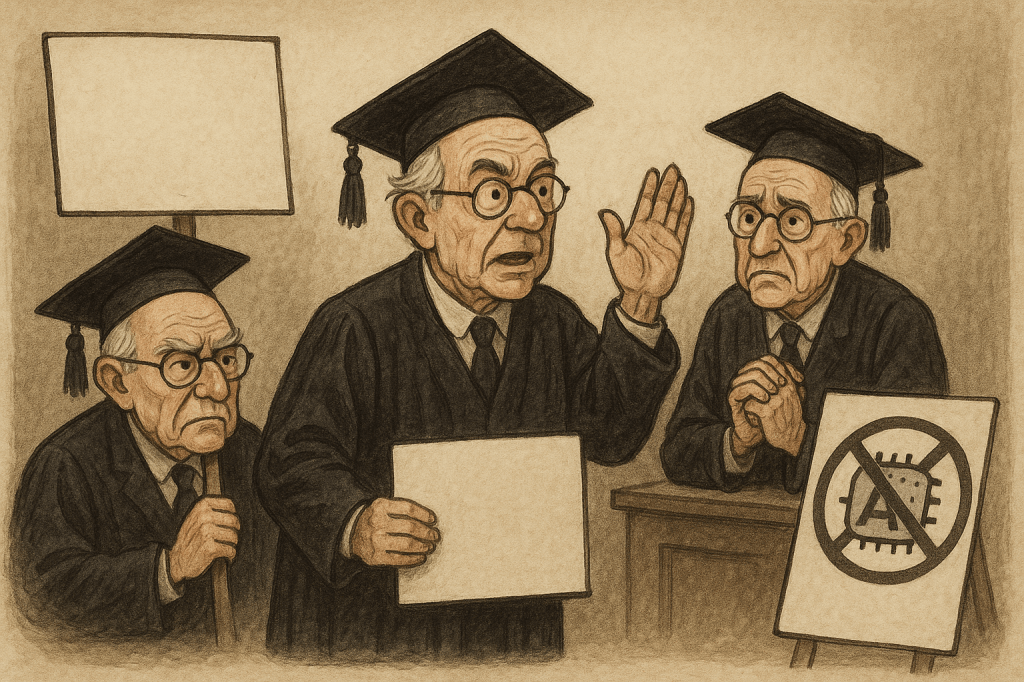

And yet, paradoxically, many journals and peer reviewers remain locked in a self-defeating contradiction: they claim to defend the sanctity of originality in research, while simultaneously enforcing rigid, performative standards of academic prose and citation that actively discourage innovation and insight. In doing so, they create a culture where the form and appearance of science are privileged over function and actual scientific progress, where how a paper is written matters more than what it says.

The Real Purpose of a Scientific Paper

At its core, a scientific paper serves one purpose: to communicate the findings of a research process that either advances a theoretical understanding or offers a practical solution. The writing is the vehicle, not the destination.

We do not ask whether a microscope, or the software suite used in a statistical analysis, tainted the “authenticity” of a result, even though few reviewers, if any, actually check whether the presented statistics add up. Yet we ask that of writing tools like AI, even when they’re used merely to structure or polish a piece, or to help the writer better articulate complex ideas. The growing fixation on how a text is generated often obscures a more critical question: does the research move the field forward? We have reached the summit of ridiculousness by even questioning (and wasting journals’ space on) whether an abstract was written by AI (let me be as plain as possible: if you are not writing your abstracts using AI, the time consumed in writing an abstract should be discounted from your salary!).

Original Thought vs. Citation Performance

This problem is compounded by a deep contradiction in the peer review process. On one hand, reviewers and editors bemoan the lack of originality in submissions, urging authors to offer novel perspectives. On the other, they often reject papers that stray too far from the citation-dense orthodoxy of academic writing, particularly when those papers provide fresh, compelling insights (they even call them “opinion pieces”). While it is important to have a robust literature review to show the state of the art in a particular field, not all research should be about the literature; if it were, we had better leave the summary to AI.

If a paper dares to deviate from the one-citation-per-sentence model, or synthesises across disciplines in a way that doesn’t fit the journal’s rigid schema, it risks almost certain rejection, not on scientific grounds but on stylistic ones disguised as science. This pressure has only intensified in the AI era, where any sign of syntactic uniformity is now scrutinised with suspicion, as if clarity were a symptom of machine authorship. This also discriminates against multilingual authors, who tend to use more flowery language and some of the words that AI usually employs. Nor should we forget that so-called AI relies on probabilistic analysis of what it has “learned” from previous papers: if it uses the word “nuance” a lot, as I have done for years, it is because “nuance” has been used consistently in earlier, mostly non-AI papers, so using it now would also be highly probable even without AI. What has probably changed is that those now conducting this important research on integrity had never read those papers, or counted those words, before, for a variety of reasons, likely stylistic ones or because the papers were not their type of science (which is based mainly on citations and not necessarily on original thought).

The AI Moral Panic

The moral panic surrounding AI in academia is understandable but misdirected. Concerns about plagiarism, ghostwriting, and the erosion of critical thinking are valid, but they aren’t new; they’re just taking a new form, and the claim that these problems have now increased substantially needs to be proved with the same rigour that those doing that research demand from everyone else. The value of AI, like any tool, depends entirely on how it is used, and claiming that a change in the use of certain words implies the prevalence of some form of academic dishonesty is far less rigorous, and far more unscientific, than many (most?) papers written with the aid of AI.

Using AI to fabricate results is fraud, plain and simple; using it merely to summarise what others have done and presenting that as original is wrong, no discussion about that (although it seems to be preferred by some reviewers). But using AI to help articulate a novel, original contribution is no different from using grammar-checking software or a thesaurus. Rejecting a paper on the mere suspicion that “AI helped with the wording” is akin to rejecting a paper because the figures were “too polished”: it is a non sequitur.

Reclaiming the Purpose of Academic Publishing

We need to return to a basic question in academic publishing: is this paper advancing the field? Is it offering a solution to a real theoretical or practical problem? Does it demonstrate methodological integrity? Is it grounded in evidence, even if it doesn’t reference every single author who ever touched the topic?

If the answer is yes, then the prose style, the citation density, and the grammatical polish are secondary at best. Reviewers should be encouraged to focus on the substance, not the scaffolding, although clearly that requires real effort from peer reviewers, rather than simply counting the number of references. Furthermore, the argument that writing properly is part of serious science or academia is as ridiculous as the one heard decades ago, when having good handwriting was also seen as a requirement for being a scholar.

At the end of the day, AI is not the enemy of science; rigid, anti-innovative gatekeeping is. Let’s not mistake performance for insight, or style for substance, as the health of scientific inquiry depends on our ability to recognise and reward originality and rigour, no matter what tools helped communicate them.

(the subtitles were suggested by AI 😉 although the ideas are sadly mine)